Key Takeaways

- 89% of enterprises now use 2+ cloud providers, averaging 3.4 platforms per organization.

- Cloud spend exceeds forecasts by 20-30%; multi-cloud amplifies this through egress fees and duplication.

- A deliberate workload placement strategy can reduce cloud costs by 30-40%.

- FinOps is essential: mature practices achieve 20-30% better unit economics.

- Unified observability and AIOps prevent multi-cloud from becoming multi-chaos.

Multi-cloud is no longer a strategy debate. It is an operational reality. According to Flexera's 2025 State of the Cloud Report, 89% of enterprises now use two or more cloud providers, with the average large organization running workloads across 3.4 cloud platforms. But running multi-cloud and running it well are very different things. Most enterprises discover that their multi-cloud environments are more expensive, more complex, and harder to secure than they anticipated.

The promise of multi-cloud -- avoiding vendor lock-in, optimizing price-performance, and leveraging best-of-breed services from each provider -- is real. But achieving that promise requires deliberate architectural decisions, robust governance, and a level of operational maturity that many organizations have not yet developed.

This article examines the strategies, patterns, and practices that enterprises are using to optimize multi-cloud architectures for both cost efficiency and scalable performance.

Why Multi-Cloud Gets Expensive Fast

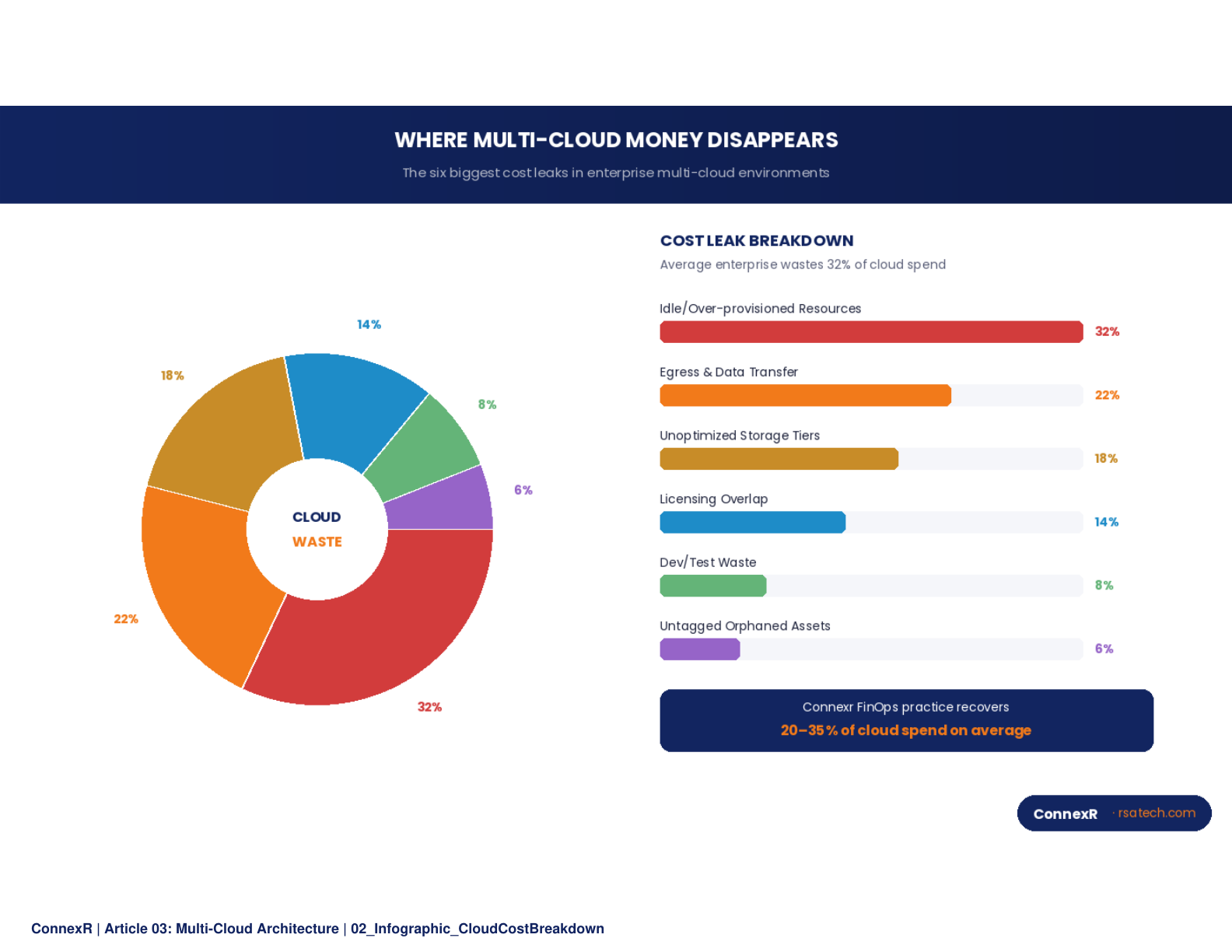

The number one complaint from enterprises running multi-cloud environments is runaway costs. Cloud spend consistently exceeds forecasts by 20 to 30%, and multi-cloud makes the problem worse for several reasons.

Data Egress Costs

Cloud providers charge for data that leaves their network. In a multi-cloud architecture where workloads on AWS need to communicate with services on Azure or data stored in GCP, egress charges accumulate rapidly. A data pipeline that moves 10 TB per month between clouds can easily generate $800 to $1,200 in egress fees alone, a cost that is invisible in architecture diagrams but very visible in monthly invoices.
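The arithmetic behind that range can be sketched in a few lines. The per-GB rates below are illustrative assumptions rather than quoted prices (real egress pricing is tiered and varies by provider, region, and destination), and the sketch assumes 1 TB = 1,000 GB.

```python
def monthly_egress_cost(tb_per_month: float, usd_per_gb: float) -> float:
    """Estimate monthly egress spend in USD for a given transfer volume.

    Assumes a flat per-GB rate and 1 TB = 1,000 GB; both are simplifications.
    """
    gb_per_month = tb_per_month * 1000
    return gb_per_month * usd_per_gb


# Hypothetical flat rates in USD per GB, chosen only to bracket the estimate.
ASSUMED_RATES = (0.08, 0.09, 0.12)

for rate in ASSUMED_RATES:
    cost = monthly_egress_cost(10, rate)
    print(f"10 TB at ${rate:.2f}/GB -> ${cost:,.2f} per month")
```

At these assumed rates, a 10 TB monthly pipeline lands between roughly $800 and $1,200, and the cost grows linearly with every additional cross-cloud data flow.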

Duplicated Infrastructure

Without deliberate governance, teams across the organization tend to provision similar infrastructure on different clouds. One team stands up a Kubernetes cluster on EKS, another on AKS, a third on GKE. Each cluster requires its own monitoring, security tooling, and operational expertise. The result is three times the operational overhead for what is fundamentally the same capability.

Idle and Over-Provisioned Resources

Multi-cloud multiplies the challenge of right-sizing resources. When an organization runs a single cloud, identifying idle instances and over-provisioned databases is straightforward. When the same organization runs three clouds, visibility fragments across three different cost management interfaces, three different instance families, and three different pricing models.

Lack of Centralized Governance

Perhaps the most significant cost driver is the absence of centralized cloud financial management. When each business unit or project team manages its own cloud spend independently, there is no mechanism for cross-cloud optimization: no way to leverage reserved capacity, committed-use discounts, or spot instance strategies across the entire portfolio.

"The enterprises that save the most on multi-cloud are those that treat FinOps as a discipline, not a dashboard."

Architectural Patterns for Cost-Efficient Multi-Cloud

Optimizing multi-cloud costs requires both architectural changes and operational discipline. The following patterns represent proven approaches that enterprises are using to reduce spend without sacrificing capability.

Pattern 1: Workload Placement Strategy

Not every workload belongs on every cloud. A workload placement strategy assigns each application or service to the cloud provider where it runs most cost-effectively, based on a combination of compute requirements, data locality, managed service availability, and pricing.

For example, AI training workloads that depend on specialized accelerators may run most cost-effectively on GCP with its TPU offerings. Windows-based enterprise applications may benefit from Azure's licensing advantages. High-throughput microservices architectures may favor AWS's mature container and serverless ecosystem.

The key is making workload placement a deliberate architectural decision rather than an accident of which team provisioned which cloud account first.
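One way to make that decision explicit is to score each candidate provider per workload. The following is a minimal sketch, not a complete placement model: `PlacementOption`, its fields, and the cost figures are all hypothetical, and a real evaluation would also weigh latency, compliance, and team expertise.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PlacementOption:
    provider: str
    monthly_compute_usd: float   # estimated compute cost on this provider
    monthly_egress_usd: float    # estimated cost of cross-cloud data movement
    has_required_services: bool  # are the needed managed services available?

    @property
    def total_usd(self) -> float:
        return self.monthly_compute_usd + self.monthly_egress_usd


def choose_provider(options: list[PlacementOption]) -> PlacementOption:
    """Pick the cheapest provider that offers the required managed services."""
    eligible = [o for o in options if o.has_required_services]
    if not eligible:
        raise ValueError("no provider offers the required managed services")
    return min(eligible, key=lambda o: o.total_usd)


# Illustrative numbers only.
options = [
    PlacementOption("aws", 4200.0, 300.0, True),
    PlacementOption("azure", 3900.0, 900.0, True),
    PlacementOption("gcp", 3500.0, 200.0, False),
]
best = choose_provider(options)
print(best.provider, best.total_usd)
```

Including egress in the total matters: in this made-up example the provider with cheaper compute loses once data movement is counted.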

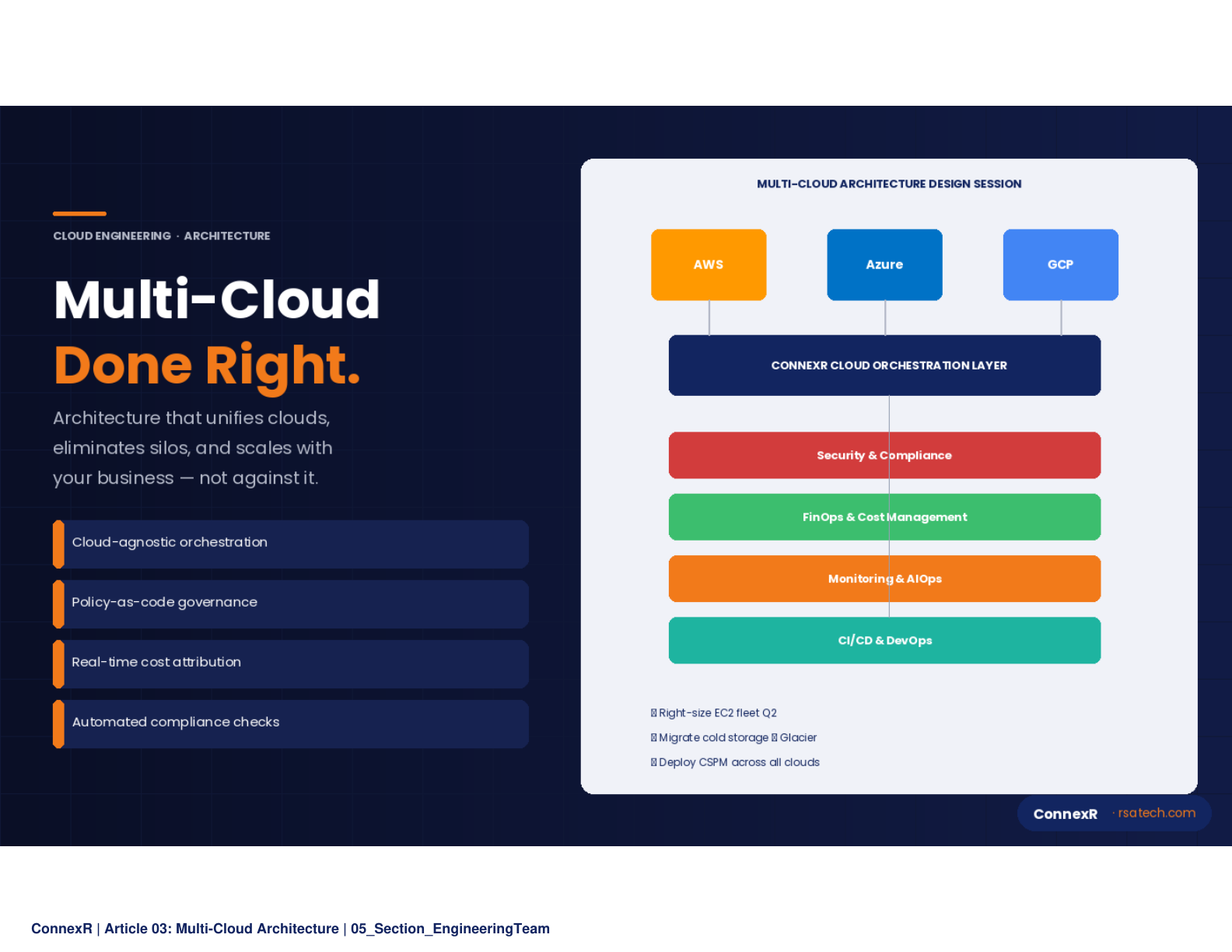

Pattern 2: Cloud-Agnostic Orchestration Layer

A cloud-agnostic orchestration layer (typically built on Kubernetes with a service mesh like Istio or Linkerd) abstracts application deployment from the underlying cloud provider. This enables workload portability, consistent security policies, and the ability to shift workloads between clouds in response to pricing changes or capacity constraints.

The orchestration layer should also include infrastructure-as-code (IaC) tooling like Terraform, Pulumi, or Crossplane that can provision and manage resources across all cloud providers through a unified workflow. This reduces the duplicated expertise otherwise required to manage CloudFormation, ARM templates, and Deployment Manager independently, although teams still need to understand the provider-specific resources they declare.

Pattern 3: Intelligent Data Tiering

Data placement and movement are the largest controllable cost factors in multi-cloud environments. An intelligent data tiering strategy involves:

- Keeping hot data as close to compute as possible to minimize egress

- Using object storage lifecycle policies to automatically move aging data to lower-cost tiers

- Implementing a data lakehouse architecture that provides a unified query layer across data stored on multiple clouds

- Caching frequently accessed cross-cloud data at the network edge to reduce redundant transfers

For organizations processing large volumes of data, the difference between a naive data architecture and an optimized one can represent 40 to 60% of total cloud data costs.
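The lifecycle idea in the list above can be sketched as a simple age-based rule. The tier names and the 30-day and 180-day thresholds are assumptions for illustration; in practice each provider's object storage exposes its own lifecycle configuration, and thresholds depend on access patterns and retrieval fees.

```python
from datetime import date


def choose_tier(
    last_access: date,
    today: date,
    cool_after_days: int = 30,      # hypothetical threshold
    archive_after_days: int = 180,  # hypothetical threshold
) -> str:
    """Return the storage tier for an object based on days since last access."""
    idle_days = (today - last_access).days
    if idle_days >= archive_after_days:
        return "archive"
    if idle_days >= cool_after_days:
        return "cool"
    return "hot"


today = date(2025, 6, 30)
for last_access in (date(2025, 6, 20), date(2025, 4, 1), date(2024, 6, 30)):
    print(last_access.isoformat(), "->", choose_tier(last_access, today))
```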

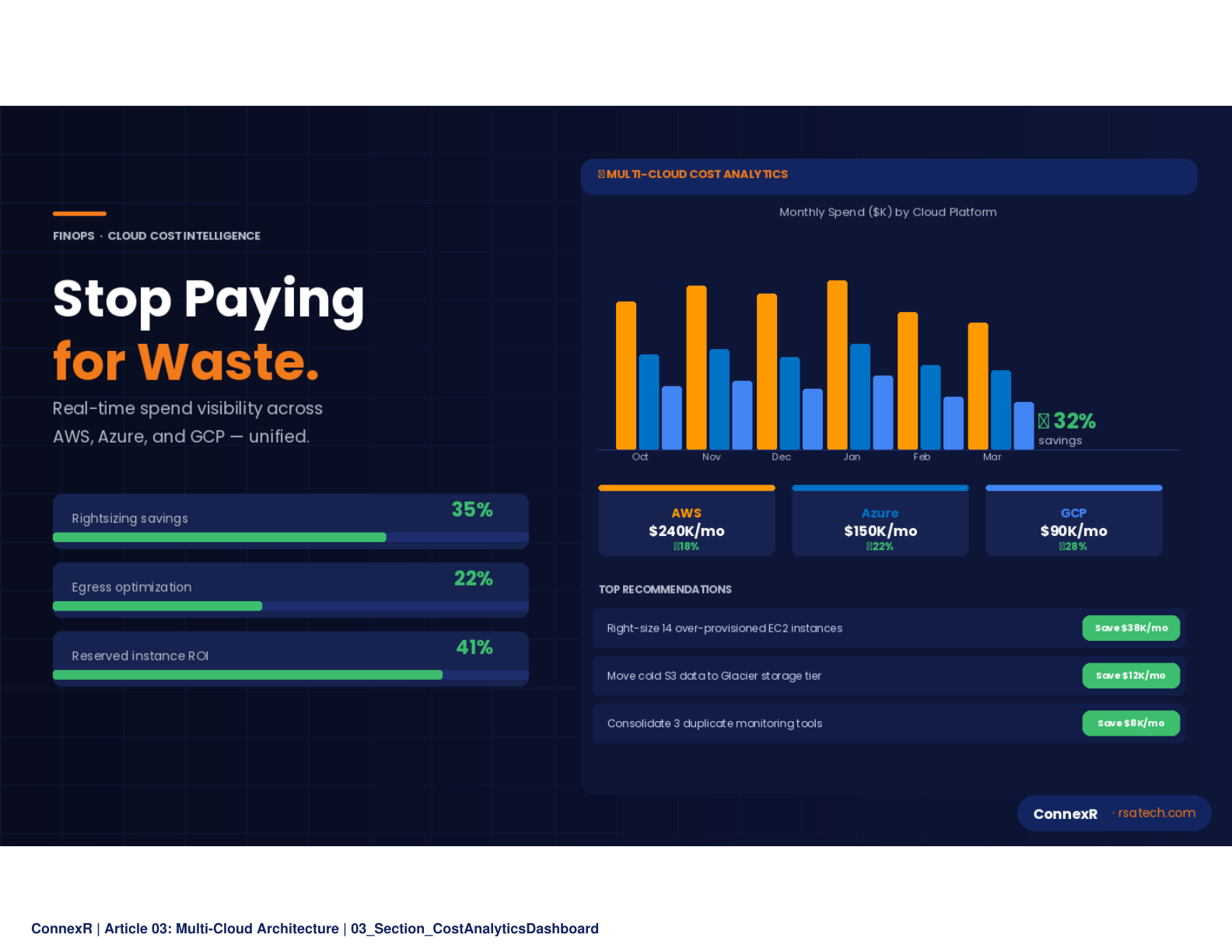

Pattern 4: FinOps as a Discipline

Cloud Financial Operations (FinOps) has matured from a niche practice into an essential organizational capability. A mature FinOps practice for multi-cloud environments includes:

- A centralized cost management platform that aggregates spend across all cloud providers

- Automated policies for resource tagging, right-sizing recommendations, and idle resource cleanup

- Committed-use discount optimization across the entire portfolio

- Chargeback and showback models that make cloud costs transparent to the teams that generate them

- Regular cost optimization reviews that identify architectural changes with the highest savings potential

The FinOps Foundation estimates that organizations with mature FinOps practices achieve 20 to 30% better unit economics on their cloud spend compared to those without.
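As a rough illustration of the aggregation, tagging, and showback items above, the sketch below works on billing line items that have already been normalized into one shape. The record fields, the required tag set, and the sample data are hypothetical; each provider's billing export has its own schema and would need an adapter first.

```python
from collections import defaultdict

# Hypothetical tagging policy: every resource must carry these tags.
REQUIRED_TAGS = {"team", "environment"}


def spend_by_provider(line_items: list[dict]) -> dict[str, float]:
    """Aggregate spend across all providers into one view."""
    totals: dict[str, float] = defaultdict(float)
    for item in line_items:
        totals[item["provider"]] += item["cost_usd"]
    return dict(totals)


def showback(line_items: list[dict]) -> dict[str, float]:
    """Attribute spend to the owning team; untagged spend is surfaced separately."""
    totals: dict[str, float] = defaultdict(float)
    for item in line_items:
        totals[item["tags"].get("team", "untagged")] += item["cost_usd"]
    return dict(totals)


def missing_tags(line_items: list[dict]) -> list[str]:
    """List resource IDs that violate the tagging policy."""
    return [
        item["resource_id"]
        for item in line_items
        if not REQUIRED_TAGS.issubset(item["tags"])
    ]


line_items = [
    {"provider": "aws", "resource_id": "i-1", "cost_usd": 120.0,
     "tags": {"team": "payments", "environment": "prod"}},
    {"provider": "azure", "resource_id": "vm-2", "cost_usd": 80.0,
     "tags": {"team": "payments"}},
    {"provider": "gcp", "resource_id": "gce-3", "cost_usd": 45.5, "tags": {}},
]
print(spend_by_provider(line_items))
print(showback(line_items))
print(missing_tags(line_items))
```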

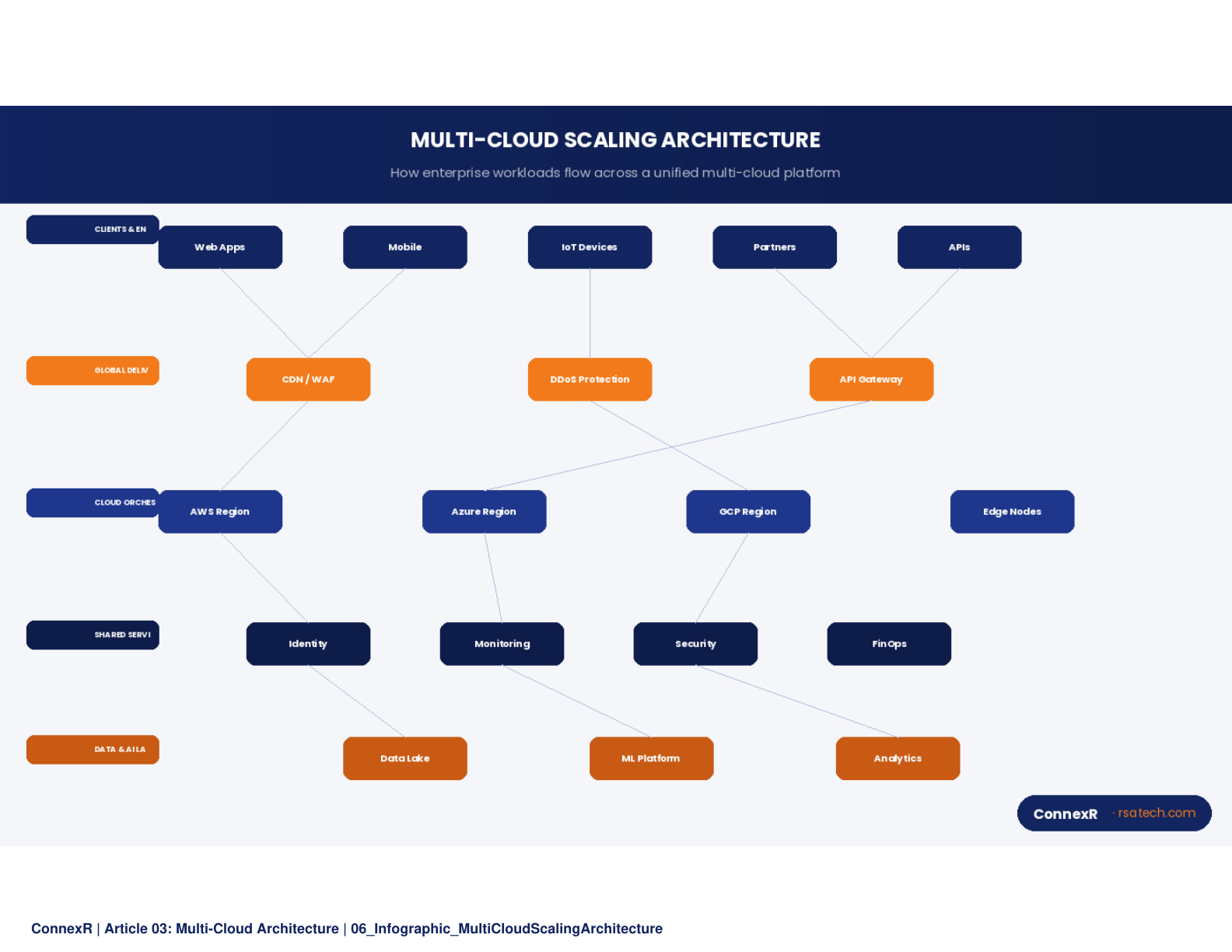

Scaling Multi-Cloud Without Scaling Complexity

Cost optimization is only half the equation. Multi-cloud architectures must also scale efficiently to handle growing workloads, traffic spikes, and geographic expansion.

Unified Observability Across Clouds

You cannot scale what you cannot see. A unified observability platform that aggregates metrics, logs, and traces across all cloud environments is foundational to multi-cloud scalability. This platform should provide a single pane of glass for monitoring application performance, infrastructure health, and cost metrics across AWS, Azure, and GCP.

AI-powered observability (AIOps) takes this further by correlating signals across clouds to detect anomalies, predict capacity bottlenecks, and automate remediation. When a latency spike on one cloud is caused by a resource constraint on another, the root cause becomes traceable only with a view that spans both clouds.
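A very reduced version of that correlation step is sketched below, assuming metrics from every cloud already land in one place. It uses a plain z-score against a recent baseline, which is a stand-in for illustration and not how any particular AIOps product works; the metric names and values are invented.

```python
from statistics import mean, stdev


def anomalous_signals(
    baselines: dict[str, list[float]],
    latest: dict[str, float],
    threshold: float = 3.0,  # assumed cutoff, in standard deviations
) -> list[str]:
    """Return the names of metrics whose latest value deviates from baseline."""
    flagged = []
    for name, history in baselines.items():
        sigma = stdev(history)
        if sigma == 0:
            continue  # a flat baseline gives no scale to compare against
        z_score = abs(latest[name] - mean(history)) / sigma
        if z_score > threshold:
            flagged.append(name)
    return flagged


# Hypothetical metrics collected from three clouds in the same time window.
baselines = {
    "aws.checkout.latency_ms": [100, 102, 98, 101, 99],
    "azure.db.cpu_pct": [40, 42, 41, 39, 38],
    "gcp.cache.hit_ratio": [0.95, 0.96, 0.94, 0.95, 0.95],
}
latest = {
    "aws.checkout.latency_ms": 140,
    "azure.db.cpu_pct": 97,
    "gcp.cache.hit_ratio": 0.95,
}
print(anomalous_signals(baselines, latest))
```

Seeing the latency anomaly on one cloud flagged in the same window as CPU saturation on another is the kind of cross-cloud hint that separate, per-provider dashboards would not put side by side.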

Automated Scaling Policies

Each cloud provider offers auto-scaling capabilities, but configuring them consistently across providers requires abstraction. Kubernetes Horizontal Pod Autoscaler (HPA) and Vertical Pod Autoscaler (VPA) provide cloud-agnostic scaling for containerized workloads. For non-containerized workloads, infrastructure-as-code templates should encode scaling policies that map to each provider's native auto-scaling mechanisms.
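The core rule HPA applies is documented by Kubernetes as `desiredReplicas = ceil(currentReplicas * currentMetricValue / desiredMetricValue)`, with no change when the ratio is within a tolerance of 1.0 (0.1 by default). The sketch below reproduces that rule for intuition only; the replica bounds are example values, and the real controller also handles stabilization windows, missing metrics, and pods that are not yet ready.

```python
import math


def desired_replicas(
    current_replicas: int,
    current_metric: float,
    target_metric: float,
    min_replicas: int = 1,    # example bound
    max_replicas: int = 10,   # example bound
    tolerance: float = 0.1,
) -> int:
    """Approximate the HPA replica calculation for a single metric."""
    ratio = current_metric / target_metric
    if abs(ratio - 1.0) <= tolerance:
        return current_replicas  # close enough to target: do not scale
    wanted = math.ceil(current_replicas * ratio)
    return max(min_replicas, min(max_replicas, wanted))


# 4 replicas averaging 90% CPU against a 60% target.
print(desired_replicas(4, current_metric=90, target_metric=60))
```

Because the rule depends only on metrics and replica counts, the same policy behaves identically on EKS, AKS, and GKE, which is the portability benefit described above.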

The scaling strategy should also account for cross-cloud failover: the ability to shift traffic from one cloud to another in the event of a regional outage or capacity limitation. This requires global load balancing, multi-cloud DNS management, and pre-provisioned capacity on the failover target.
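The target selection behind such a failover can be sketched as a filter followed by a priority choice. `Target`, its fields, and the sample values are hypothetical; in a real deployment the health and capacity inputs would come from the global load balancer and capacity planning data.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Target:
    name: str
    healthy: bool
    spare_capacity_rps: int  # pre-provisioned headroom, requests per second
    priority: int            # lower number is preferred


def pick_failover_target(targets: list[Target], required_rps: int) -> Optional[Target]:
    """Choose the preferred healthy target with enough pre-provisioned capacity."""
    candidates = [
        t for t in targets
        if t.healthy and t.spare_capacity_rps >= required_rps
    ]
    return min(candidates, key=lambda t: t.priority, default=None)


targets = [
    Target("azure-westeurope", healthy=True, spare_capacity_rps=500, priority=1),
    Target("gcp-europe-west1", healthy=True, spare_capacity_rps=3000, priority=2),
    Target("aws-eu-central-1", healthy=False, spare_capacity_rps=5000, priority=0),
]
chosen = pick_failover_target(targets, required_rps=2000)
print(chosen.name if chosen else "no viable failover target")
```

The example shows why pre-provisioned capacity matters: the preferred healthy target is skipped because it cannot absorb the required load.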

Multi-Cloud Security at Scale

Security is the dimension where multi-cloud complexity creates the most risk. Each cloud provider has its own identity model, network security architecture, and compliance tooling. Maintaining consistent security policies across all of them requires a security abstraction layer that enforces:

- Uniform identity and access management across clouds

- Consistent network security policies through a cloud-agnostic firewall or ZTNA solution

- Centralized secrets management that works across all environments

- Unified compliance monitoring that maps controls from SOC 2, HIPAA, ISO 27001, and other frameworks to the native security configurations of each cloud

Without this abstraction, organizations end up with three different security postures, each with its own gaps and blind spots.
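One small piece of such an abstraction is a single baseline policy evaluated against resources from every provider. The sketch below assumes resource settings have already been normalized into common keys; the policy keys, resource records, and IDs are hypothetical, and mapping each provider's native configuration into this shape is the hard part that the sketch leaves out.

```python
# Hypothetical baseline: the setting each resource is expected to have.
POLICY = {"encrypted_at_rest": True, "publicly_accessible": False}


def violations(resources: list[dict]) -> list[tuple[str, str, str]]:
    """Return (provider, resource id, setting) for every baseline violation.

    A missing setting counts as a violation, so unknown state fails closed.
    """
    found = []
    for resource in resources:
        for setting, expected in POLICY.items():
            if resource["settings"].get(setting) != expected:
                found.append((resource["provider"], resource["id"], setting))
    return found


resources = [
    {"provider": "aws", "id": "bucket-a",
     "settings": {"encrypted_at_rest": True, "publicly_accessible": False}},
    {"provider": "azure", "id": "container-b",
     "settings": {"encrypted_at_rest": True, "publicly_accessible": True}},
    {"provider": "gcp", "id": "bucket-c",
     "settings": {"publicly_accessible": False}},
]
for provider, resource_id, setting in violations(resources):
    print(f"{provider}/{resource_id}: {setting} does not match baseline")
```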

Multi-Cloud by the Numbers

| Metric | Value |
|---|---|
| Enterprises using 2+ cloud providers | 89% |
| Average cloud platforms per enterprise | 3.4 |
| Typical cloud spend overrun versus forecast | 20-30% |
| Unit economics improvement with mature FinOps | 20-30% |
| Share of cloud data costs affected by intelligent tiering | 40-60% |

Building a Multi-Cloud Center of Excellence

The most successful multi-cloud organizations establish a Cloud Center of Excellence (CCoE), a cross-functional team responsible for defining architectural standards, evaluating new cloud services, managing vendor relationships, and driving continuous optimization.

The CCoE operates as an internal consulting function that provides:

- Standardized reference architectures for common workload patterns

- A cloud services catalog that maps approved services across all providers

- Training and enablement programs that build cloud fluency across engineering teams

- A governance framework that balances developer agility with cost and security guardrails

For organizations that lack the internal capacity to build a CCoE, partnering with a managed cloud services provider can accelerate multi-cloud maturity while reducing the operational burden on internal teams.

The Path Forward

Multi-cloud architecture optimization is not a one-time project. It is a continuous discipline that evolves as cloud providers release new services, pricing models change, and organizational needs shift. The enterprises that extract the most value from multi-cloud are those that treat it as a strategic capability requiring dedicated attention to architecture, governance, and operations.

The reward is substantial: reduced cloud spend, improved application performance, greater resilience, and the organizational agility to adopt the best technology for each use case without being locked into a single vendor's roadmap.

Conclusion

ConnexR designs, builds, and operates multi-cloud architectures across AWS, Azure, and GCP. Our managed cloud services and AIOps capabilities help enterprises optimize cost and performance across their entire cloud portfolio -- without adding internal headcount.