Nearly every Fortune 500 company has launched a generative AI pilot program, yet fewer than 20% have moved beyond experimentation into production-grade deployments. The gap between a compelling demo and an enterprise-ready system remains vast, and closing it requires far more than selecting the right large language model. Organizations that treat generative AI as a technology project rather than a strategic capability transformation are discovering that hype alone does not deliver ROI.

The generative AI landscape has matured significantly since the initial wave of excitement in 2023. Enterprise leaders now recognize that lasting value comes not from isolated chatbot experiments, but from deeply integrated systems that augment decision-making, accelerate workflows, and create new competitive advantages. The organizations pulling ahead are those that have built deliberate frameworks for moving from proof-of-concept to production at scale.

The Pilot-to-Production Gap

The vast majority of enterprise generative AI initiatives stall in the pilot phase. A McKinsey survey found that while 92% of companies plan to increase their AI investments, only a fraction have scaled beyond initial experiments. The reasons are systemic rather than purely technical. Pilots typically run in sandboxed environments with curated data, limited users, and relaxed compliance requirements. Production deployments demand integration with enterprise data pipelines, adherence to security and governance frameworks, reliable performance under load, and clear accountability structures.

Organizational dynamics widen the pilot-to-production gap further. Business units launch independent experiments without coordinating with IT or data teams. Systems tuned and evaluated on small, curated datasets produce impressive demos that fall apart when exposed to the diversity and noise of real-world data. Without a centralized strategy, enterprises end up with dozens of disconnected pilots and no clear path to operational value.

Key Challenges in Enterprise GenAI Deployment

Data governance and privacy

Enterprise data is the fuel that makes generative AI valuable, but it also introduces the most significant risks. Production LLM deployments must navigate complex data classification requirements, access controls, regulations such as GDPR, CCPA, and HIPAA, and attestation standards such as SOC 2. Organizations need robust data lineage tracking to understand which data was used for training or retrieval, who has access, and how outputs are being consumed downstream. Without strong governance, a single data leak through an AI system can expose sensitive customer information, proprietary intellectual property, or regulated financial data.
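As a rough illustration of access controls and lineage tracking working together, the sketch below filters documents by classification before they can reach a model and records what was released to whom. The classification levels, the `Document` and `LineageLog` types, and the `authorized_docs` function are all hypothetical names chosen for this example, not part of any particular governance product.

```python
from dataclasses import dataclass, field

# Hypothetical classification levels, ordered from least to most sensitive.
LEVELS = {"public": 0, "internal": 1, "confidential": 2, "restricted": 3}


@dataclass
class Document:
    doc_id: str
    classification: str
    text: str


@dataclass
class LineageLog:
    """Records which documents were released to which user."""
    entries: list = field(default_factory=list)

    def record(self, user: str, doc_ids: list) -> None:
        self.entries.append({"user": user, "doc_ids": doc_ids})


def authorized_docs(docs, clearance: str, user: str, log: LineageLog):
    """Keep only documents at or below the user's clearance, and log them."""
    allowed = [d for d in docs if LEVELS[d.classification] <= LEVELS[clearance]]
    log.record(user, [d.doc_id for d in allowed])
    return allowed


# Illustrative data only.
docs = [
    Document("kb-1", "internal", "Expense policy overview"),
    Document("fin-7", "restricted", "Unreleased quarterly figures"),
]
log = LineageLog()
visible = authorized_docs(docs, clearance="internal", user="analyst-42", log=log)
```

Filtering before retrieval, rather than after generation, means restricted text never enters the model's context in the first place. A production system would likely pull clearances from the identity and access management system rather than pass them as arguments.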

Hallucination mitigation

Large language models generate plausible-sounding text that may be factually incorrect, a phenomenon known as hallucination. In consumer applications, this is an inconvenience. In enterprise contexts involving legal documents, financial reports, medical records, or engineering specifications, hallucinations can carry serious consequences. Leading organizations are implementing multi-layered mitigation strategies: retrieval-augmented generation to ground outputs in verified data, confidence scoring to flag uncertain responses, human-in-the-loop review workflows for high-stakes decisions, and automated fact-checking pipelines that cross-reference outputs against authoritative sources.
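The layered approach described above can be sketched as a simple routing rule. This is a minimal sketch under stated assumptions: the confidence score is assumed to come from some upstream scorer, the 0.8 threshold is arbitrary, and `DraftAnswer` and `route_answer` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass
class DraftAnswer:
    text: str
    confidence: float   # assumed score in [0, 1] from an upstream scorer
    cited_sources: int  # number of retrieved passages supporting the answer
    high_stakes: bool   # e.g. legal, financial, or medical content


def route_answer(answer: DraftAnswer, min_confidence: float = 0.8) -> str:
    """Return 'human_review', 'flag', or 'release' for a draft answer."""
    # High-stakes content always goes to a human, regardless of score.
    if answer.high_stakes:
        return "human_review"
    # Ungrounded or low-confidence answers are flagged as uncertain.
    if answer.cited_sources == 0 or answer.confidence < min_confidence:
        return "flag"
    return "release"
```

For example, `route_answer(DraftAnswer("...", 0.95, 2, False))` returns `"release"`, while the same answer with `high_stakes=True` is sent to human review. Checking the high-stakes condition first is a deliberate choice here: a confident model is not treated as a substitute for review where errors are costly.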

Cost management at scale

The economics of generative AI shift dramatically between pilot and production. A proof-of-concept serving 50 internal users might cost a few hundred dollars per month in API calls. Scaling that same application to 50,000 users across the organization can produce monthly bills exceeding $500,000 without careful optimization. Enterprises must develop sophisticated cost management strategies including prompt optimization, caching architectures, model selection policies that match task complexity to model capability, and hybrid approaches that route simple queries to smaller, cheaper models while reserving frontier models for complex reasoning tasks.
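To make the arithmetic concrete, the sketch below first scales a per-user cost linearly, then estimates the effect of caching and model routing. Every number in it is an illustrative assumption: $10 per user per month is one value consistent with "a few hundred dollars" for 50 users, and the per-query prices, routing share, and cache hit rate are hypothetical.

```python
def monthly_cost(users: int, cost_per_user: float) -> float:
    """Linear scaling: total cost grows in proportion to user count."""
    return users * cost_per_user


def blended_monthly_cost(
    queries: int,
    small_price: float,     # assumed cost per query on a smaller model
    frontier_price: float,  # assumed cost per query on a frontier model
    small_share: float,     # fraction of billable queries routed to the small model
    cache_hit_rate: float,  # fraction of queries answered from cache at no model cost
) -> float:
    """Estimate cost when caching and routing are both in place."""
    billable = queries * (1 - cache_hit_rate)
    per_query = small_share * small_price + (1 - small_share) * frontier_price
    return billable * per_query


pilot = monthly_cost(50, 10.0)           # 50 users at an assumed $10 each
production = monthly_cost(50_000, 10.0)  # same per-user cost, 1000x the users

# One million queries, all sent to the frontier model, no cache.
unoptimized = blended_monthly_cost(1_000_000, 0.001, 0.02, 0.0, 0.0)
# Same volume with 70% routed to the small model and a 20% cache hit rate.
optimized = blended_monthly_cost(1_000_000, 0.001, 0.02, 0.7, 0.2)
```

Under these assumed inputs the pilot costs $500 per month and the production rollout $500,000, which matches the scale described above. The optimized scenario comes out at roughly a quarter of the unoptimized one, but that ratio depends entirely on the assumed prices and traffic mix.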

Integration with legacy systems

Most enterprises run on heterogeneous technology stacks that have evolved over decades. Integrating generative AI with legacy ERP systems, mainframe databases, and proprietary workflows requires careful API design, data transformation layers, and often significant middleware development. The most successful deployments treat integration as a first-class engineering challenge rather than an afterthought, building abstraction layers that decouple AI capabilities from specific infrastructure dependencies.
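One way to picture such an abstraction layer is an interface that business logic depends on, with the model backend and the legacy data format hidden behind it. The sketch below is an assumption-laden illustration: `TextGenerator`, `EchoGenerator`, `erp_record_to_prompt`, and `summarize_order` are hypothetical names, and the record fields are invented.

```python
from typing import Protocol


class TextGenerator(Protocol):
    """Interface the rest of the system depends on, not a specific vendor."""

    def generate(self, prompt: str) -> str: ...


class EchoGenerator:
    """Stand-in backend for local testing; a real one would call a model API."""

    def generate(self, prompt: str) -> str:
        return f"[draft] {prompt}"


def erp_record_to_prompt(record: dict) -> str:
    """Data transformation layer: flatten a legacy record into prompt text."""
    fields = ", ".join(f"{key}={value}" for key, value in sorted(record.items()))
    return f"Summarize this purchase order: {fields}"


def summarize_order(record: dict, llm: TextGenerator) -> str:
    """Business logic sees only the interface and the transformed record."""
    return llm.generate(erp_record_to_prompt(record))


summary = summarize_order({"po": "1001", "amount": 250}, EchoGenerator())
```

Because `summarize_order` accepts anything with a `generate` method, the model provider can be swapped without touching the ERP-facing code, and the ERP schema can change without touching the model client.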

A Framework for Enterprise GenAI Maturity

Organizations benefit from a structured maturity model that provides clear milestones and decision criteria for advancing their generative AI capabilities. Based on patterns observed across successful enterprise deployments, we propose a four-stage framework.

Stage 1: Pilot

The organization runs isolated experiments with generative AI, typically in low-risk use cases such as internal knowledge search, draft content generation, or code assistance. Success criteria focus on demonstrating feasibility and gathering user feedback. Governance is informal, and costs are managed through limited access. Most enterprises today remain in this stage.

Stage 2: Integration

Successful pilots are connected to enterprise data sources and embedded into existing workflows. This stage requires formal data governance policies, security reviews, and integration with identity and access management systems. The focus shifts from "can we do this?" to "can we do this reliably, securely, and at reasonable cost?" Organizations at this stage typically have 3-5 production use cases serving defined user populations.

Stage 3: Optimization

The organization has established a platform approach to generative AI, with shared infrastructure, reusable components, and centralized model management. Cost optimization becomes a primary focus, with teams implementing caching, prompt engineering best practices, and model routing strategies. Performance is measured through business KPIs rather than technical metrics alone. Enterprises at this stage report 30-60% cost reductions compared to their initial production deployments.

Stage 4: Autonomous

Generative AI operates as a core enterprise capability with agentic workflows that can plan, execute, and self-correct across complex multi-step processes. Human oversight shifts from task-level review to strategic governance. The AI systems continuously learn from organizational feedback loops and adapt to changing business requirements. Fewer than 5% of enterprises have reached this stage, but it represents the long-term competitive target.

Real-World Deployment Patterns

Retrieval-Augmented Generation (RAG)

RAG has emerged as the dominant architecture for enterprise generative AI because it reduces hallucination while keeping data current without expensive retraining. In a RAG system, the user's query is first used to retrieve relevant documents from a vector database or search index, and those documents are then provided as context to the language model. This grounds the model's outputs in verified enterprise data, although it does not eliminate errors: the model can still misread or ignore the retrieved context. Leading implementations use hybrid retrieval strategies combining dense vector search with traditional keyword matching, chunking strategies optimized for their specific document types, and re-ranking models that improve the relevance of retrieved passages before they reach the LLM.
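The retrieve-then-generate flow with hybrid scoring can be sketched in a few lines. This is a toy version under stated assumptions: cosine similarity over term counts stands in for a real embedding model, the equal weighting (`alpha=0.5`) is arbitrary, and the function names and sample documents are invented for illustration.

```python
import math
from collections import Counter


def tokenize(text: str) -> list:
    return text.lower().split()


def keyword_score(query: str, doc: str) -> float:
    """Fraction of distinct query terms that appear in the document."""
    q, d = set(tokenize(query)), set(tokenize(doc))
    return len(q & d) / len(q) if q else 0.0


def vector_score(query: str, doc: str) -> float:
    """Cosine similarity over term counts; a stand-in for embedding similarity."""
    q, d = Counter(tokenize(query)), Counter(tokenize(doc))
    dot = sum(q[t] * d[t] for t in q)
    norm = math.sqrt(sum(v * v for v in q.values())) * math.sqrt(
        sum(v * v for v in d.values())
    )
    return dot / norm if norm else 0.0


def retrieve(query: str, docs: list, k: int = 2, alpha: float = 0.5) -> list:
    """Rank documents by a weighted mix of the two scores and keep the top k."""
    def hybrid(doc: str) -> float:
        return alpha * vector_score(query, doc) + (1 - alpha) * keyword_score(query, doc)

    return sorted(docs, key=hybrid, reverse=True)[:k]


def build_prompt(query: str, passages: list) -> str:
    """Place retrieved passages in the prompt so the model answers from them."""
    context = "\n".join(f"- {p}" for p in passages)
    return f"Answer using only the context below.\nContext:\n{context}\nQuestion: {query}"


docs = [
    "refund policy allows returns within 30 days",
    "quarterly revenue grew in the europe region",
    "office parking rules",
]
query = "what is the refund policy"
prompt = build_prompt(query, retrieve(query, docs, k=1))
```

In a real deployment, `vector_score` would be replaced by a lookup against a vector database, and a re-ranking model would typically reorder the results of `retrieve` before `build_prompt` is called.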

Fine-tuning for domain specialization

While RAG handles knowledge grounding, fine-tuning addresses behavioral specialization. Organizations fine-tune models to match their specific terminology, communication style, and reasoning patterns. A financial services firm might fine-tune a model on regulatory filings and compliance documentation so it produces outputs that align with industry conventions. Fine-tuning is most effective when combined with RAG: the fine-tuned model understands the domain's language and conventions, while RAG ensures it references current and accurate information. The cost of fine-tuning has dropped significantly, with techniques like LoRA and QLoRA enabling domain-specific models at a fraction of previous costs.
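The cost reduction from LoRA follows from simple arithmetic. Instead of updating a full weight matrix of shape `d_out × d_in`, LoRA trains two small matrices of shapes `d_out × r` and `r × d_in`, where the rank `r` is much smaller than either dimension. The sketch below counts trainable parameters for one layer; the 4096 dimensions and rank 8 are illustrative values, not taken from any specific model.

```python
def full_params(d_in: int, d_out: int) -> int:
    """Trainable parameters when the whole weight matrix is updated."""
    return d_in * d_out


def lora_trainable_params(d_in: int, d_out: int, rank: int) -> int:
    """Trainable parameters for the two low-rank adapter matrices."""
    return rank * (d_in + d_out)


full = full_params(4096, 4096)                    # 16,777,216
lora = lora_trainable_params(4096, 4096, rank=8)  # 65,536
ratio = lora / full                               # 0.00390625, about 0.4%
```

For this assumed layer, the adapter trains under half a percent of the parameters that full fine-tuning would. QLoRA goes further by keeping the frozen base weights in a quantized format, which lowers memory requirements during training.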

Agent architectures

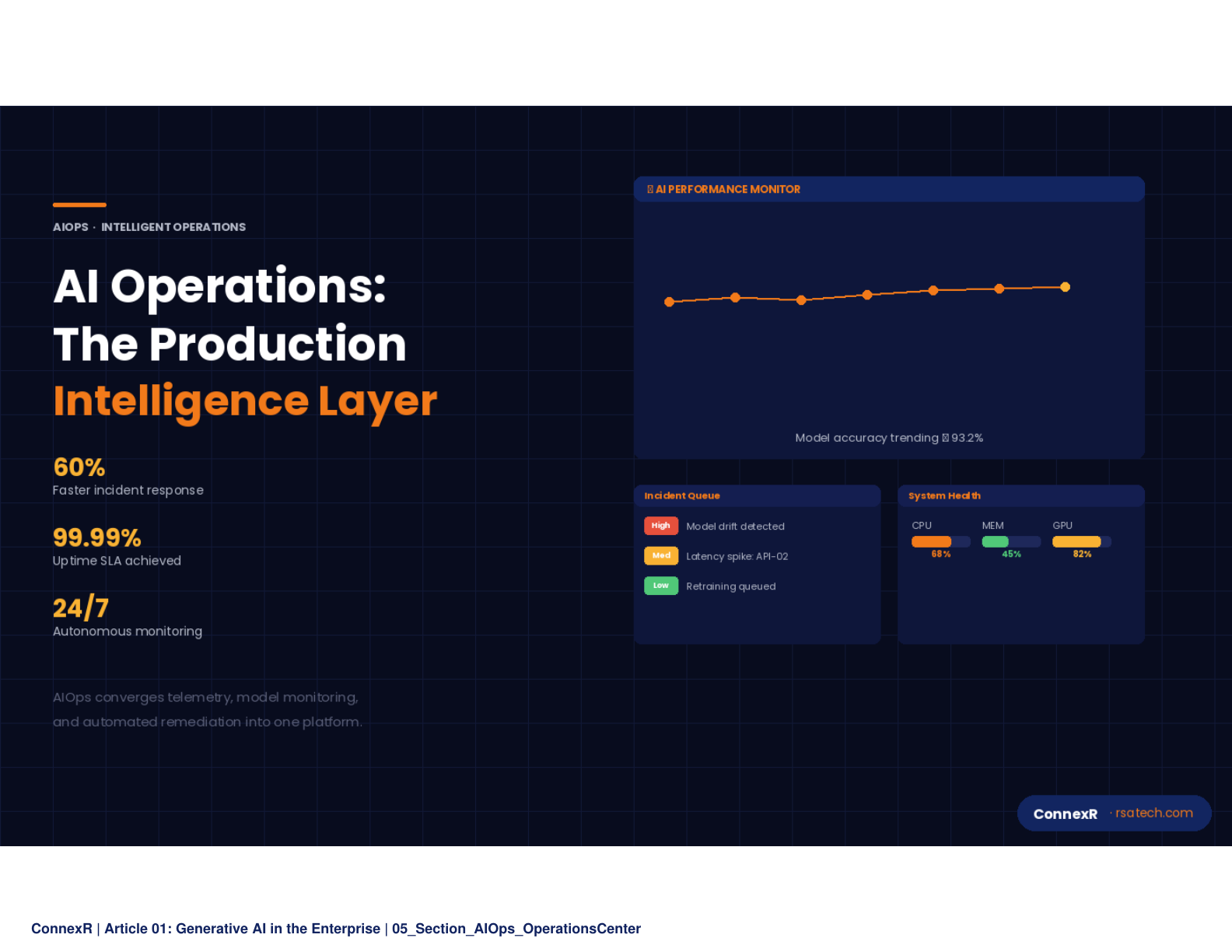

The most advanced enterprise deployments are moving toward agentic architectures where LLMs serve as reasoning engines that can plan and execute multi-step workflows. An agent might receive a request to "prepare the quarterly business review," then autonomously query the data warehouse, generate charts, draft narrative summaries, compile them into a presentation, and route the output for human review. These architectures require robust tool-use frameworks, error handling, and guardrails that prevent agents from taking unauthorized actions. Organizations deploying agents successfully invest heavily in observability, building detailed logging and monitoring systems that provide full transparency into the agent's reasoning chain and action history.
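The guardrail and observability requirements above can be illustrated with a small wrapper around tool calls. This is a minimal sketch, not a real agent framework: `GuardedAgent`, `UnauthorizedToolError`, and the two example tools are hypothetical, and the plan is supplied by hand rather than produced by an LLM.

```python
from typing import Callable


class UnauthorizedToolError(Exception):
    """Raised when the agent attempts a tool outside its allow-list."""


class GuardedAgent:
    def __init__(self, tools: dict, allowed: set):
        self.tools: dict = tools      # tool name -> callable taking one string
        self.allowed: set = allowed   # names this agent may invoke
        self.audit_log: list = []     # one entry per attempted action

    def _log(self, tool: str, argument: str, status: str) -> None:
        self.audit_log.append({"tool": tool, "argument": argument, "status": status})

    def call_tool(self, name: str, argument: str) -> str:
        # Guardrail: refuse anything not explicitly allowed, and record it.
        if name not in self.allowed or name not in self.tools:
            self._log(name, argument, "blocked")
            raise UnauthorizedToolError(name)
        try:
            result = self.tools[name](argument)
        except Exception:
            # Error handling: record the failure, then let the caller decide.
            self._log(name, argument, "error")
            raise
        self._log(name, argument, "ok")
        return result

    def run_plan(self, plan: list) -> list:
        """Execute (tool, argument) steps in order, stopping at the first failure."""
        return [self.call_tool(name, arg) for name, arg in plan]


def query_warehouse(question: str) -> str:
    return f"rows for {question}"


def send_email(recipient: str) -> str:
    return f"sent to {recipient}"


tools: dict = {"query_warehouse": query_warehouse, "send_email": send_email}
agent = GuardedAgent(tools=tools, allowed={"query_warehouse"})
results = agent.run_plan([("query_warehouse", "Q3 revenue")])
```

Here `send_email` is registered but not allowed, so a call to `agent.call_tool("send_email", "cfo")` raises `UnauthorizedToolError` and leaves a `"blocked"` entry in `audit_log`. Logging blocked and failed attempts alongside successful ones is what gives reviewers a complete action history.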

Measuring ROI for Enterprise GenAI

Traditional ROI frameworks struggle to capture the full value of generative AI because benefits often manifest as improved quality, faster cycle times, and enhanced decision-making rather than direct cost displacement. Effective measurement requires a multi-dimensional approach.

Productivity metrics track time savings and throughput improvements. Organizations report that developers using AI-assisted coding tools complete tasks 25-50% faster, customer service teams resolve tickets in half the time with AI-drafted responses, and legal teams review contracts 60% more quickly with AI-powered analysis.

Quality metrics measure error reduction, consistency improvements, and compliance adherence. Enterprises using AI for document review have seen error rates drop by 40%, while marketing teams report significantly improved content consistency across channels.

Strategic metrics capture competitive advantages that are harder to quantify but often represent the greatest long-term value. These include speed to market for new products, ability to personalize customer experiences at scale, and the capacity to process and act on information that would be impossible to analyze manually.

The most rigorous enterprises establish baseline measurements before deployment, run controlled experiments comparing AI-augmented and traditional workflows, and track metrics over multi-quarter periods to account for learning curves and adoption dynamics.
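A baseline comparison of this kind reduces to a small calculation. The sketch below compares mean task time between a control group and an AI-augmented group; the numbers are invented for illustration, and a real analysis would also need adequate sample sizes and a significance test.

```python
from statistics import mean


def relative_improvement(baseline: list, treated: list) -> float:
    """Fractional reduction in the mean of a lower-is-better metric."""
    base_avg = mean(baseline)
    return (base_avg - mean(treated)) / base_avg


# Hypothetical minutes per ticket, measured before and after deployment.
baseline_minutes = [40, 50, 60]
augmented_minutes = [20, 25, 30]
improvement = relative_improvement(baseline_minutes, augmented_minutes)
```

With these made-up figures the mean drops from 50 to 25 minutes, an improvement of 0.5, or 50%. Repeating the same calculation each quarter shows whether the gain holds up once the novelty wears off and adoption broadens.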

Key Takeaways

The pilot-to-production gap remains the defining challenge for enterprise generative AI, with fewer than 20% of organizations successfully scaling beyond experimentation.

Data governance, hallucination mitigation, cost management, and legacy integration are the four pillars that determine whether enterprise GenAI deployments succeed or stall.

A structured maturity framework (Pilot, Integration, Optimization, Autonomous) provides clear milestones for advancing from initial experiments to enterprise-grade AI capabilities.

RAG, fine-tuning, and agent architectures represent the three dominant deployment patterns, each addressing different enterprise requirements for accuracy, specialization, and autonomy.

ROI measurement must go beyond cost savings to include productivity, quality, and strategic metrics tracked over multi-quarter periods.

Move beyond the hype. The enterprises gaining competitive advantage from generative AI are those with deliberate strategies for governance, architecture, and scale. Start with a clear maturity assessment and build your roadmap from production requirements, not pilot enthusiasm.